Wherein I list some (mostly) recent happenings, ramble a bit, and provide links, in an order roughly determined by importance and relevance to particle physics.

- Obvious big news item of the week is the release of the Joint Analysis of BICEP2/Keck Array and Planck Data (also on arXiv now), which derives an upper limit on the tensor-to-scalar ratio of r<0.12 at 95% C.L., perfectly consistent with r=0. So, no evidence for primordial gravitational waves (yet). This is somewhat different from the original BICEP2 result of r=0.20 (+0.07, −0.05), with r=0 disfavoured at 7.0σ (lest we forget the YouTube reveal). That paper (from March last year) can be found on the arXiv. It is less than a year old and already has almost 1000 citations! (And Nature has a rundown on that.) The important caveat can be found at the end of the abstract, emphasised after peer review and acceptance by Physical Review Letters: "Accounting for the contribution of foreground dust will shift this value downward by an amount which will be better constrained with upcoming data sets." Well, now we know that amount...

Of course, this basic conclusion has been known for a while. Rumours began to circulate by May that the treatment of polarised emission from the galactic dust foreground was problematic, since it had been estimated (and misinterpreted) from a preliminary figure in a slide shown at a conference. That month a couple of papers appeared on the arXiv arguing the point. Nevertheless the BICEP2 paper was accepted in June with the added caveat I mentioned above.

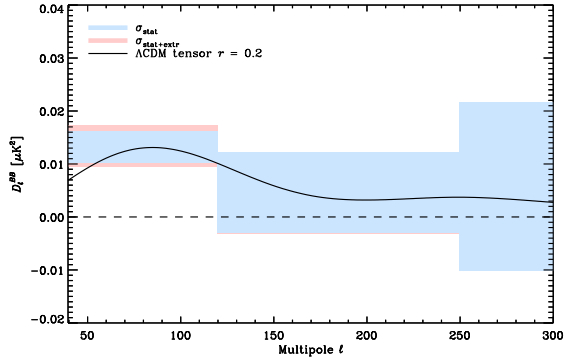

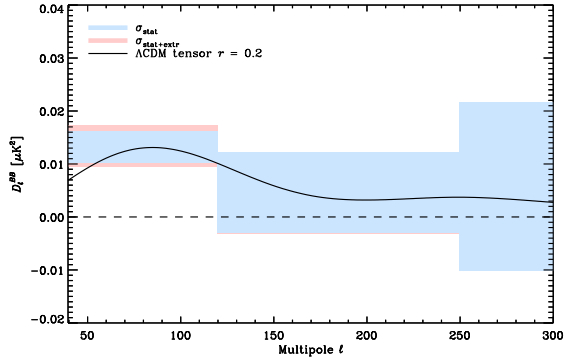

In September Planck released their study of the polarised dust emission, showing that the effect of dust was likely of the same order of magnitude as the effect measured by BICEP2. There are some very good blog entries on this, see Sean Carroll, Katie Mack, In The Dark, Blank On The Map, Excursionset, Resonaances, etc. The plot below was enough to convince mostly everyone that the dust could account for all of the signal; it shows the "amount of B-mode polarisation" versus multipole moment, with blue the expected dust component and black the best fit theoretical prediction from gravitational waves claimed by BICEP2.

The book was almost shut, but Planck reminded us that this was an extrapolation from a high frequency region to the lower frequency which BICEP2 observed. We were told to be patient physicists until the joint analysis was complete. The release was pushed back and back, but now here we are... primordial gravitational waves at r=0.2 are dead. So it goes.

The upshot is that we do have gravitational lensing modes at 7.0σ! But wait, that number is familiar... Also,

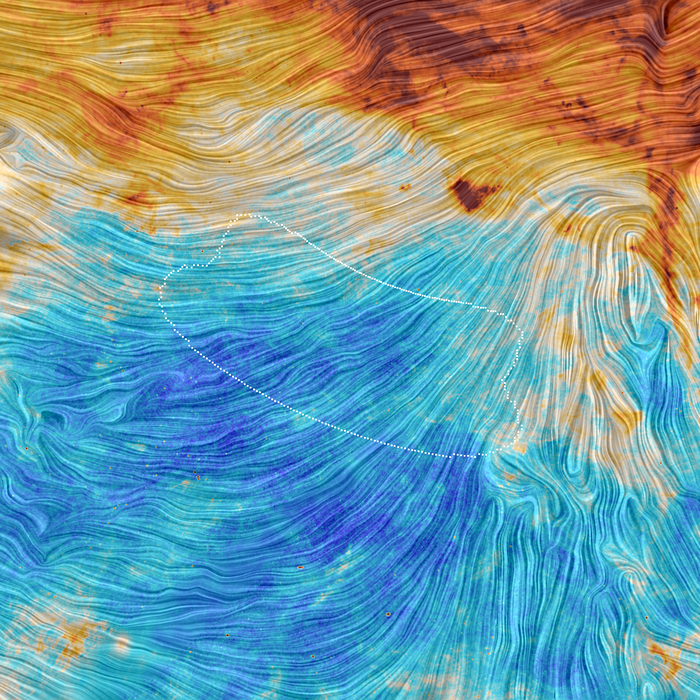

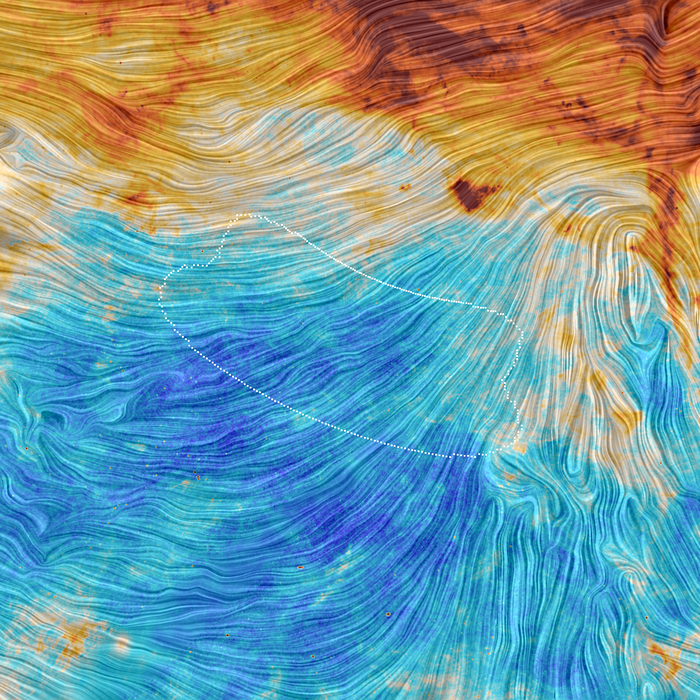

the following image was released which is just stunning and certainly worth a stare. The colour scale is for dust emission, and the texture is the orientation of the Galactic magnetic field. Outlined region is the BICEP2 patch.

So what's next?

Resonaances has a blog post about that. Any non-zero measurement of r in the future will still be big news, and there are many experiments which will soon be sensitive to r~0.01. Certainly we could still see a primordial gravitational wave signal in the coming few years. Once again, we must sit and be patient physicists...

- Today was the 2015 release of Planck full mission data products and scientific papers. The press release is here. The result they are spinning is that Planck measures the beginning of reionisation at 560 million years after the big bang, significantly later than the WMAP measurement of 420 million years. This is more consistent with observations from Hubble of the earliest galaxies (300-400 million years); now there's enough time for these structures alone to inject the energy needed to end the dark ages. I'm sure we will hear more about all their results in the coming week(s).

They also released the full map in hi-res of the polarised emission from Milky Way dust, reminiscent of Van Gogh:

- I missed this last week but ATLAS has released evidence for the Higgs-boson Yukawa coupling to tau leptons (actually there were quite a few releases, which is just the wrapping up of the remaining Run-I analyses). They measure a signal strength μ=1.43+0.43−0.37, and an excess of events over the expected background from other Standard Model processes with an observed (expected) significance of 4.5 (3.4) standard deviations. So they got somewhat lucky. Here's the plot:

CoEPP has been involved in some of this analysis and I have seen a few talks on it in the past. I am always amazed, when I see the histograms before the BDT (and even after the BDT in each channel) that they are able to dig out this signal at all. Just look at this histogram of an important BDT input variable from one of the better channels, τlep+τhad:

Doesn't look too bad, until you see that the signal histogram is presented 50x larger than it really is, just so you can see it. Obviously the experimentalists have plenty of tricks up their sleeves which are especially powerful when you know exactly what you're looking for. It's a remarkable analysis. And to be honest, if we required a local significance of 5σ to "discover" the Higgs, when we didn't know its mass, then h→ττ with a significance of 4.5σ, when we know exactly where it should be... that's discovery in my book.
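For anyone who wants to turn those sigmas into probabilities, here is a minimal sketch. It assumes the usual one-sided Gaussian tail convention for quoting significances; the function name `one_sided_p_value` is my own and is not taken from any experiment's software.

```python
import math


def one_sided_p_value(z):
    """Probability that a standard Gaussian fluctuates above z sigma.

    Assumption: the one-sided tail convention for quoting significances.
    """
    return 0.5 * math.erfc(z / math.sqrt(2.0))


if __name__ == "__main__":
    # 5 sigma is the discovery convention; 4.5 (3.4) are the observed
    # (expected) significances quoted for the h -> tau tau excess above.
    for z in (5.0, 4.5, 3.4):
        print(f"{z:.1f} sigma -> p = {one_sided_p_value(z):.2e}")
```

So the gap between 4.5σ and 5σ is roughly a factor of ten in p-value, which gives a feel for how much the "we know where to look" argument has to carry.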

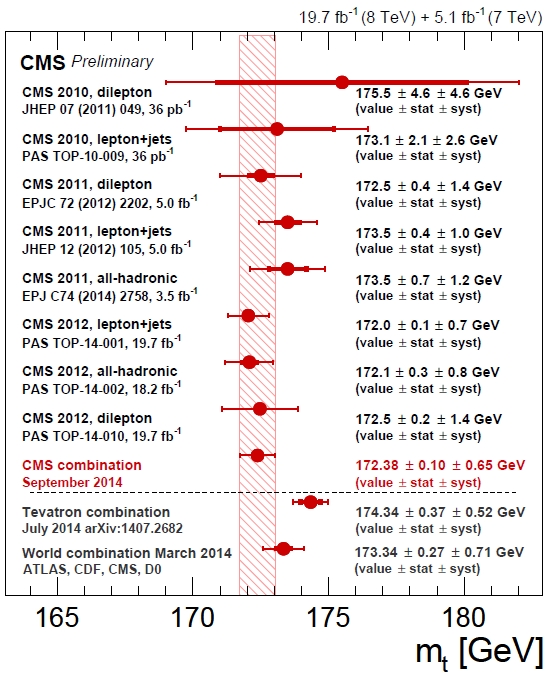

- DZERO submitted the more detailed documentation on their top mass measurement originally published as a letter back in May. Their measurement is 174.98±0.76 GeV. They say in the abstract, "This constitutes the most precise single measurement of the top-quark mass," but that is no longer true. As far as I am aware that honour goes to CMS, with a measurement of 172.38±0.10(stat)±0.65(syst) GeV. (Evidently the LHC has a lot of top statistics!) Read more about that at Tommaso's blog post from four months ago. The Tevatron continues to pull the world average measurement up.
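A quick back-of-the-envelope check of the "most precise" claim, as a sketch only. It assumes the CMS statistical and systematic uncertainties can be added in quadrature, and that the DZERO and CMS measurements are uncorrelated (they are not entirely, so the "tension" number is a naive guide at best). The helper name `quadrature` is mine.

```python
import math


def quadrature(*errors):
    """Combine independent uncertainties in quadrature."""
    return math.sqrt(sum(e * e for e in errors))


# Numbers quoted in the text above (GeV).
d0_mass, d0_err = 174.98, 0.76
cms_mass, cms_stat, cms_syst = 172.38, 0.10, 0.65

cms_err = quadrature(cms_stat, cms_syst)

# Naive tension, assuming the two measurements are uncorrelated.
tension = (d0_mass - cms_mass) / quadrature(d0_err, cms_err)

if __name__ == "__main__":
    print(f"CMS total uncertainty: {cms_err:.2f} GeV (DZERO: {d0_err:.2f} GeV)")
    print(f"Naive DZERO-CMS difference: {tension:.1f} sigma")
```

The CMS total comes out at about 0.66 GeV, so it does edge out DZERO's 0.76 GeV, and the 2.6 GeV gap between the two central values is what keeps the Tevatron pulling the average up.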

- CMS released a new preprint: Search for supersymmetry using razor variables in events with b-tagged jets in pp collisions at √s = 8 TeV. The search constrains gluinos and stops.

It is cool to see these razor variables in action! They're a very nice method for isolating new physics signals with pair-produced particles each decaying to visible+invisible. Other variables (which may be more familiar) are MT2, MCT and MCT⊥. (ATLAS used MT2 for their top squark search.) The difficulty for such searches is how to deal with the fact that, in an event, we cannot know the momentum vectors of the invisible particles individually, but can only reconstruct the total missing transverse momentum vector. So the general idea has been to construct kinematic variables which are an approximation of the mass scale of the underlying event, and which have a kinematic end-point (a maximum possible value) defined by the masses of the particles involved. The endpoint is exact at the parton truth-level but ends up being smeared by showering and detector effects. Above some e.g. MT2_{cut} there are expected to be very few SM events; usually MT2_{cut} ≈ m_W or m_t. Signal events will accumulate beyond MT2_{cut} since new physics is expected to have larger masses. Thus these variables are a very nice way to eliminate SM background in these kinds of searches, applicable for R-parity conserving SUSY models with neutralino dark matter candidates, and leptoquarks.

But they are not perfect. A problem with MCT for example is that the kinematic endpoint actually depends on the centre-of-mass of the pair-produced particle system. (This was eliminated with MCT⊥). Also, a lot of events end up piled at ~0 for both MCT and MT2. The razor approach avoids this problem by boosting from lab-frame to the particle-pair centre-of-mass frame with a "best guess" boost, and constructing a kinematic variable there. In general it performs at least as well as MCT⊥ and MT2 (see below).
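To make the endpoint idea a bit more concrete, here is a minimal sketch of the simplest of these variables, the contransverse mass MCT, using its standard definition MCT² = (E_T1 + E_T2)² − |p_T1 − p_T2|² for the two visible particles. The function names and the example masses (a 500 GeV parent and a 100 GeV invisible particle) are my own illustrative choices, not anything from an ATLAS or CMS analysis, and the sketch ignores showering, detector effects and the boost corrections mentioned above.

```python
import math


def mct(pt1, phi1, pt2, phi2, m1=0.0, m2=0.0):
    """Contransverse mass of two visible particles (same units as inputs)."""
    et1 = math.hypot(pt1, m1)  # transverse energy, sqrt(pt^2 + m^2)
    et2 = math.hypot(pt2, m2)
    # Components of the vector DIFFERENCE of the transverse momenta.
    dx = pt1 * math.cos(phi1) - pt2 * math.cos(phi2)
    dy = pt1 * math.sin(phi1) - pt2 * math.sin(phi2)
    mct_sq = (et1 + et2) ** 2 - (dx * dx + dy * dy)
    return math.sqrt(max(mct_sq, 0.0))  # guard against rounding below zero


def mct_endpoint(m_parent, m_invisible):
    """Maximum MCT for pair-produced parents, each -> massless visible + invisible."""
    return (m_parent ** 2 - m_invisible ** 2) / m_parent


if __name__ == "__main__":
    # Hypothetical masses in GeV, for illustration only.
    print("endpoint:", mct_endpoint(500.0, 100.0))
    # Back-to-back visible particles give MCT ~ 0 (the pile-up at zero).
    print("back-to-back:", mct(240.0, 0.0, 240.0, math.pi))
    # Visible particles aligned in the transverse plane saturate the endpoint.
    print("aligned:", mct(240.0, 0.3, 240.0, 0.3))
```

With parents at rest, each visible particle carries momentum (M² − m²)/(2M), which is 240 GeV for the masses chosen here. The aligned configuration therefore lands exactly on the endpoint, while the back-to-back one sits at zero, which is the pile-up mentioned above.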

And they now have a successor: super-razor variables. Super-razor variables are designed to increase sensitivity in searches for specific decay topologies. To construct these variables one iteratively boosts from reference frame to reference frame with "best guesses", and at each stage you get some information about the masses (and mass splittings) involved. For example, in di-slepton production where each slepton decays to a lepton and neutralino, there are three interesting frames to boost to. One advantage of this approach is that, along the way, you reconstruct some extra information such as decay angles which you can then use to help discriminate your signal. Click and look at the figure below to see its power...

I am not aware of any ATLAS/CMS search which has employed the super-razor variables yet, but I look forward to seeing it in Run-II.

- My supervisors and I have a letter paper out on the arXiv today. It is a short analysis of naturalness in the three-flavour Type I see-saw model, with the following take-home message: standard hierarchical thermal leptogenesis is unnatural, and there's no way out in the minimal model. I will write a short post about it next week some time.

- According to a new arXiv preprint, there is no indirect dark matter signal from the Large Magellanic Cloud. The following figure tells the tale:

The investigators expected to begin to probe the areas (see the brazil lines) of parameter space interesting for the galactic centre excess (four marked areas), but they aren't quite there yet. Matthew Buckley (one of the investigators) has some tweets about it (reading upwards, beginning Feb 5).

[Edit 16/02: I read this paper in a little more detail last week. It is the first indirect dark matter search in the LMC, certainly worth doing as it is potentially the second brightest (after the galactic centre) annihilation source in our sky. However, unlike dwarf galaxies, there is a lot of baryonic matter to contend with; the authors use a data-driven method to model this. They end up seeing a broad excess which is consistent within systematic limitations of the background model (they stress this point, so it is not taken as further evidence for the Hooperon!). It should be noted that the above plot (their Fig. 22) is conservative in terms of the statistical analysis and choice of LMC centre, but it is optimistic in the halo profile. Assuming an NFW or Isothermal (cored) profile weakens these limits by an order of magnitude (see their Figs. 17 and 18). The analysis appears to be difficult yet worth attempting; unfortunately, it cannot compete with the limit from dwarf galaxies, especially after Fermi Pass 8.]

- Could the missing satellite problem be solved with just dark energy? This paper on the arXiv suggests the possibility, and New Scientist ran a story. This is surprising, since I would have thought that this is already taken into account in simulations. What am I missing?

- James D. "BJ" Bjorken was one of the two winners of the Wolf Prize in Physics this year. It is known as somewhat of a predictor for the Nobel. (Brout, Englert and Higgs were recipients in 2004.) You can read about it at the official site, and also at Tommaso's blog. The former writes, "in retrospective, Bjorken's scaling not only led to the discovery of quarks, but also pointed the direction toward the mathematical framework governing all fundamental interactions."

- On Wednesday, Roman prosecutors closed the case on the disappearance of Ettore Majorana. Majorana, who disappeared at sea in 1938 at 32 years of age, is now believed to have been alive and well, living in Valencia, Venezuela, between 1955 and 1959.

- I only just read that as of January 1, Physical Review journals and Physical Review Letters will allow article titles in the reference list. Huge.

- Jester noted that the Symposium on Lepton Photon Interactions resembles a vomiting dragon. I'll let you make up your own mind...

- The Crayfis app for detecting ultra-high energy cosmic rays using a network of smartphones is already in beta testing. Read more about it from Kyle Cranmer in a blog post, or see the original paper from October last year.

- On YouTube (or similar):

- Delta Institute for Theoretical Physics has put up videos of the History (and Future) of Dark Matter Symposium. They're from 2013. Speakers are Bertone, Peebles, Turner, Bosma, Sadoulet, Silk, and White.

- SLAC uploaded a public lecture on the hunt for new physics at the LHC.

- Perimeter Institute hosted a public talk from Kendrick Smith on Cosmology in the 21st Century.

- Space.com have released a 25min documentary on the Laser Interferometer Gravitational-wave Observatories (LIGO) called LIGO Generations, a follow-up to LIGO, A Passion for Understanding.

- Sixty Symbols has a few videos on the Multiverse, interviews with Prof Laurence Eaves and Prof Mike Merrifield, and yesterday Dr Tony Padilla on Max Tegmark's levels of Multiverse.

- There is a NASA JPL video of the 325m diameter asteroid (and its 70m moon!) which flew past Earth last week, at about three times the Earth-moon distance.

- Hubble caught a triple transit of Jupiter's largest moons on 23 Jan and released a video yesterday.

- Numberphile on the randomness of a coin toss (spoiler: it's not 50/50).

- Physics Girl on Newton's Amplifier and supernovae.

- Veritasium on Do Cell Phones Cause Cancer? (Feat. Physics Girl, who I am seeing a lot more of since her video on pool vortices [>3m views now]).

- Bad Astronomy discusses a new video of the Earth in infrared, produced from images taken by geostationary satellites. I followed some links and found some videos from the same user, James Tyrwhitt-Drake, who also has a (frankly terrifying) timelapse of the Sun, and a stunning timelapse of the Earth, both in 4K.

- Goldpaint Photography has released a short film, Illusion of Lights: A Journey into the Unseen, which has some incredible shots of the night sky. Watch in HD!

- The "Climate Denial Crock of the Week" has started its Climate Change Elevator Pitch series.

- Astronaut Terry Virts posted this Vine on Tuesday, a timelapse of an orbit on the ISS without a sunset!

- On Monday, NASA released its fiscal year 2016 budget request.

- The exciting news: there is a request for $30mil to begin planning a mission to Europa! Bad Astronomy claims that the request has a decent shot, too, since it has a champion in Congress. Looks like this has been in the works for a while: JPL released somewhat of a promotional video for such a mission back in November.

- The sad news: the budget also suggests that there may be plans to cease Mars rover Opportunity operations. Meanwhile NASA JPL released a YouTube video celebrating 11 Years of Opportunity on Mars (the images of clouds at 0:46 really got me). Why stop now!

- NASA has successfully launched the SMAP (Soil Moisture Active Passive) satellite observatory, which will gather three years of data on global soil moisture levels via a very cool-looking 6m rotating reflector. You can read about it at space.com or at NASA, and watch the launch here. It is the last of five Earth-observing space missions to be launched in the past year by NASA (including: Orbiting Carbon Observatory-2, Global Precipitation Measurement Core Observatory, ISS-RapidScat, and Cloud-Aerosol Transport mission).

- The US (Republican-led) Senate on 21 January passed, 98-1, an amendment to a bill which stated: "It is the sense of the Senate that climate change is real and not a hoax." However they rejected, 50-49 with a requirement for 60, the stronger amendment: "It is the sense of Congress that 1) climate change is real, and 2) human activity significantly contributes to climate change." Senator Inhofe, who once claimed that global warming was "the greatest hoax ever perpetrated on the American people", said: "The hoax is that there are some people who are so arrogant to think that they are so powerful they can change climate. Man can't change climate." I'll leave that alone...

- "Computational Linguistics Reveals How Wikipedia Articles Are Biased Against Women", as an article here or on the arXiv.

- Last but certainly not least, here is some old news that I only recently discovered... an arXiv paper on predicting the length of winter in the world of Westeros. From the abstract: Thus, by speculating that the planet under scrutiny is orbiting a pair of stars, we utilize the power of numerical three-body dynamics to predict that, unfortunately, it is not possible to predict either the length, or the severity of any coming winter. We conclude that, alas, the Maesters were right -- one can only throw their hands in the air in frustration and, defeated by non-analytic solutions, mumble "Coming winter? May be long and nasty (~850 days, T<268K) or may be short and sweet (~600 days, T~273K). Who knows..."
